X.POSE

In today's data-driven society, individuals carrying smartphones and interacting with social media networks have agreed, often without conscious consideration, to policies that grant service providers explicit rights to harvest and utilize personal data on a massive scale. These companies offer "free" services in exchange for our data.

There currently exists a paradox in our internet culture. As a generation, we are simultaneously obsessed with publicity and privacy. We publish and post details about our lives online while demanding the most advanced privacy protection software. An unprecedented degree of potential exposure comes with the current mode of existence.

We have ceded control of our data emissions and activity logs. By participating in this hyper-connected society while having little to no control of my digital data production, how much of myself do I unknowingly reveal? To what degree does the aggregated metadata collected from me paint an accurate portrait of who I am as a person? What aspects of my individuality are reflected in this portrait?

x.pose is an exploration of these questions. Since we have already ceded control of our digital data emissions, x.pose goes a step further to broadcast the wearer's data for anyone and everyone to see.

Production

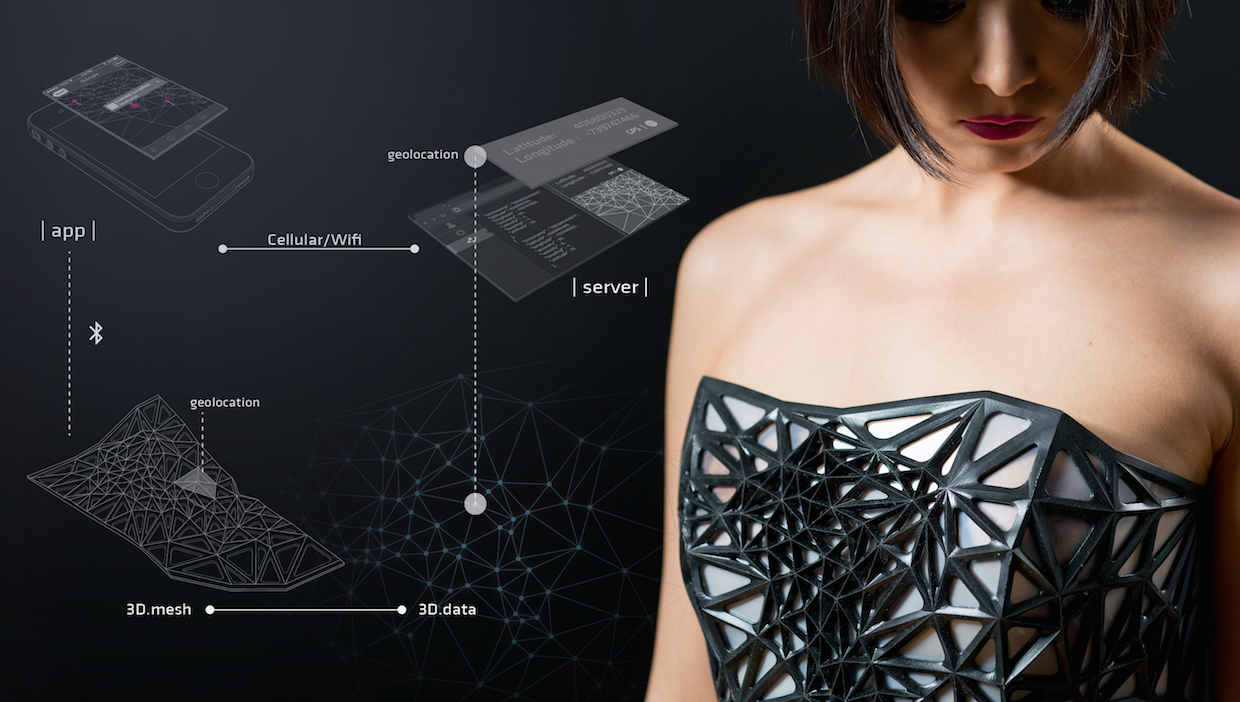

The first step was to build a mobile app and server to automatically collect my data over time. This was done using Node.js and PhoneGap.

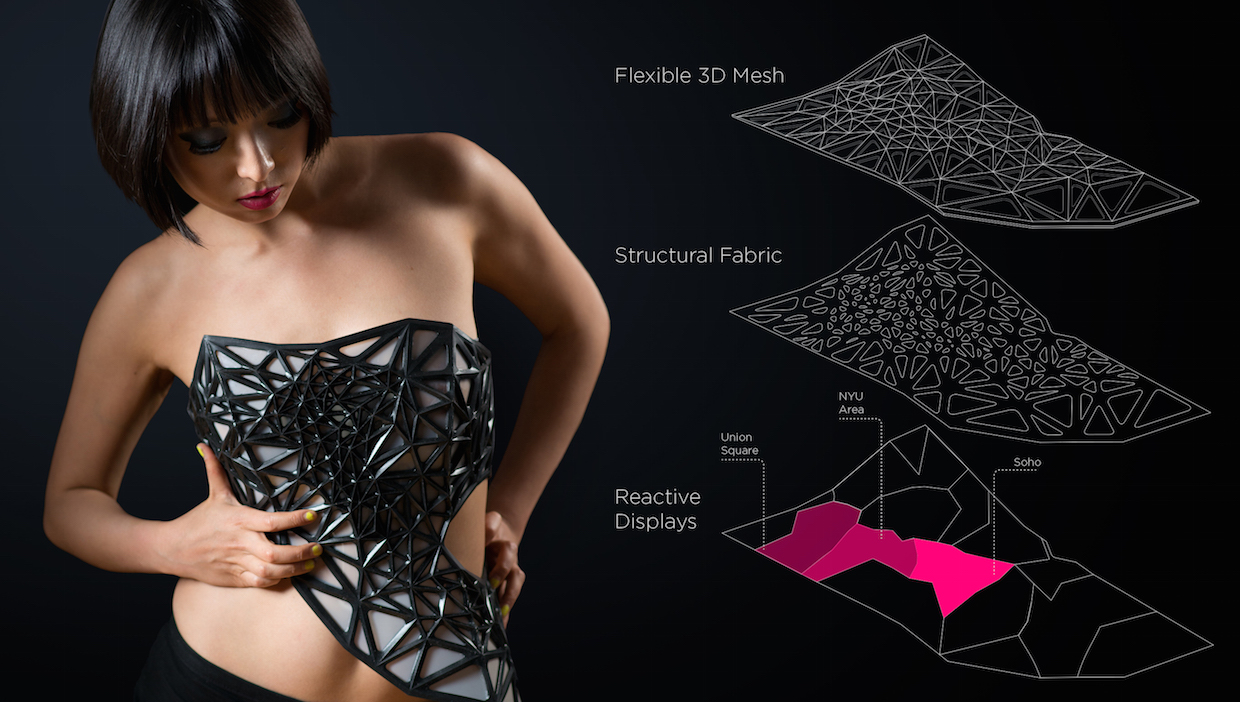

Second, the recorded dataset was used as the basis for the generative aspects of the personalized wearable couture. The output is an abstract 3D mesh armature built from my location data points, collected over about a month. The dataset was fed into Processing to produce the pattern, which was then exported to Rhino to make the 3D mesh.
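As a rough illustration of how location points can become the raw material for a pattern, the sketch below scales a list of (latitude, longitude) samples into a square canvas. It is not the Processing sketch used for x.pose: the function name `normalize_points`, the tuple layout, and the `size` parameter are all assumptions made for this example, and it treats coordinates as flat, which is only reasonable over a small area such as a single city.

```python
from typing import List, Tuple

# Hypothetical record shape for this example: (latitude, longitude) in degrees.
Point = Tuple[float, float]


def normalize_points(points: List[Point], size: float = 1.0) -> List[Point]:
    """Scale (lat, lon) samples into a size x size canvas as (x, y) pairs.

    The same scale factor is used on both axes so the shape of the
    point cloud is preserved rather than stretched to fill the square.
    """
    if not points:
        return []

    lats = [lat for lat, _ in points]
    lons = [lon for _, lon in points]
    min_lat, min_lon = min(lats), min(lons)

    # Use the larger of the two extents as the common span.
    span = max(max(lats) - min_lat, max(lons) - min_lon)
    if span == 0:
        # All samples are at the same place; put them at the origin.
        return [(0.0, 0.0) for _ in points]

    scale = size / span
    # x comes from longitude, y from latitude.
    return [((lon - min_lon) * scale, (lat - min_lat) * scale)
            for lat, lon in points]
```

The resulting (x, y) pairs could then be connected or triangulated by whatever tool generates the pattern; that step is left out here because the source does not describe it.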

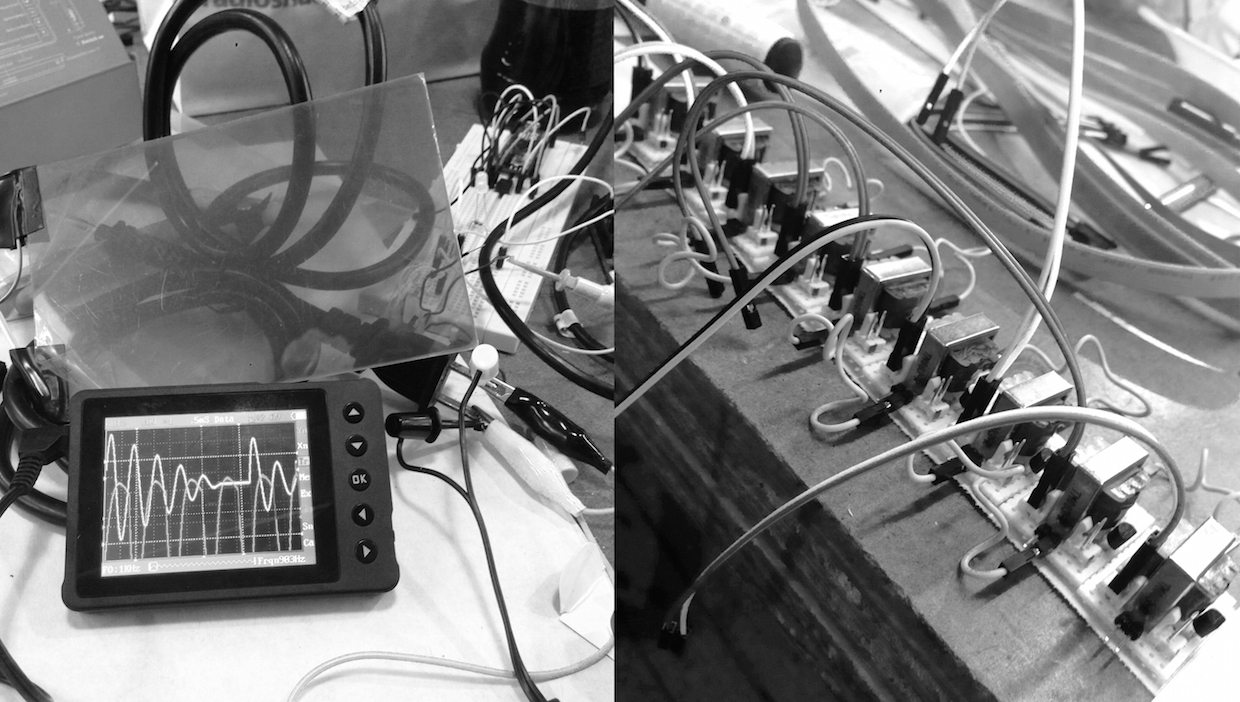

Lastly, the mobile app and server are used to provide real-time data transmission over Bluetooth to an Arduino, which controls reactive displays that change in opacity to reveal the wearer’s skin. This occurs in proportion to the volume of information that is passively generated.
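The proportional relationship between data volume and opacity can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the code running on the garment: the names `opacity_for_volume` and `to_level`, the `max_volume` ceiling, and the idea of sending an 8-bit level (0 to 255) to the microcontroller are all hypothetical choices made for the example.

```python
def opacity_for_volume(volume: float, max_volume: float) -> float:
    """Return a display opacity in [0.0, 1.0].

    Assumption for this sketch: 1.0 is fully opaque, 0.0 is fully
    transparent, and opacity falls linearly as the data volume rises,
    so more passively generated data reveals more of the wearer.
    """
    if max_volume <= 0:
        raise ValueError("max_volume must be positive")
    # Clamp the exposure ratio so out-of-range readings stay valid.
    exposure = min(max(volume / max_volume, 0.0), 1.0)
    return 1.0 - exposure


def to_level(opacity: float) -> int:
    """Map an opacity in [0.0, 1.0] to an 8-bit level (0 to 255).

    A single byte is a convenient unit to send over a serial link;
    how the receiving hardware drives the display is not covered here.
    """
    clamped = min(max(opacity, 0.0), 1.0)
    return round(clamped * 255)
```

For example, with a hypothetical ceiling of 100 units of data, a reading of 25 gives an opacity of 0.75, while any reading at or above 100 makes the panel fully transparent.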

x.pose was a collaboration between designers Xuedi Chen and Pedro G. C. Oliveira.

Photography by: Roy Rochlin

Model: Heidi Lee

Makeup: Rashad Taylor

Featured on FastCompany, Wired UK, AnimalNY, The Creators Project, CNET, iO9, NOTCOT, Ars Technica, DesignBoom, Engadget, Huffington Post, ELLE, and more!

YouFab Global Creative Awards - Winner, 2014

Exhibited at the Perfect Bodies | Rebellious Machines show in Mexico City (2017), HopeX (2014), and Engadget Expand (2014).